The drive might have been temporarily disconnected somehow (a problem with the slot it's in, for example). When you add the new drive, you can see whether it happens again.

If you go to Log Center and switch it to drives, it should show a degraded reason such as a timeout and/or I/O errors. Only QNAP can reattach an incorrectly ejected drive (bitmap has to have been enabled before it happened; bitmap is off by default as it slows writes down by 5-20%).

I didn't remove it while it was booted, it was powered down. The only two errors in the log are these:

Warning - Space of was reaching the limit.

Error - Storage Pool was degrade, please repair it.

Inside the HDD/SSD tab, it shows Drive 1 (the problem drive) as:

Also of note, I ran a S.M.A.R.T. test on all 4 drives and they all show as "Healthy". I guess I will wait for the new drive, insert it, and repair the pool, but I can't tell whether I have a bad hard drive if all 4 show as healthy.

I don't have any way to back up the entire Synology Drive as it's too much space, but I can back up the small amount of very important data (documents, photos, etc.). The rest is misc stuff like media that could be reacquired and some work stuff that is already backed up elsewhere.

I guess this is the part that confused me. I didn't remove it from the pool; I guess it got removed because it was degraded. But now the drive shows as healthy, and it's unclear to me whether it still has whatever data was on it and whether it can be re-added without wiping it. I was under the impression that if it was a false positive, the degradation would go away.

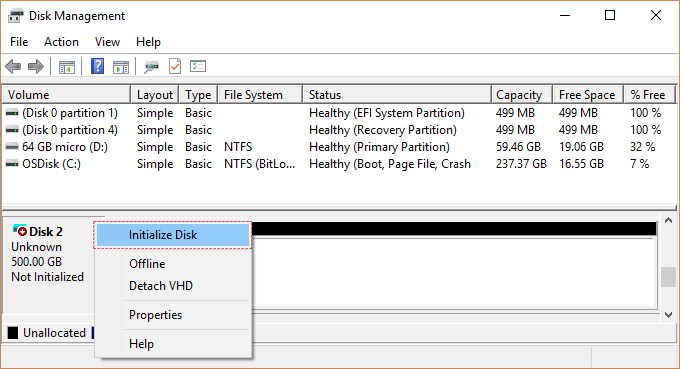

If you're using SHR2/RAID6 then sure, you could add it back in as a repair (losing 1 disk when you have dual redundancy isn't critical until you lose a second disk). I secure erase drives first before adding them back in so the drive has been fully reset (depends what's wrong with the drive). I take screenshots of both halves of the attributes page to compare before and after (there is a slider bar to get to the bottom half of the attributes page).

A drive is always erased when it is added to a pool (this doesn't delete your pool/volume).

Are you sure you didn't remove it while the NAS was on? That will cause it to be booted from the pool. Otherwise the NAS had already booted the drive from the pool after it failed to respond for 8 seconds.

I don't recommend repairing a drive that has problems back in when using SHR1/RAID5/1, as you no longer have any redundancy and your pool is now in a critical state (I recommend backing up your data before proceeding with the disk replacement).

Synology Log Center > Logs should state what happened (not Overview).
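If comparing screenshots of the attributes page by eye gets tedious, the same before/after check can be roughed out in a few lines of Python. This is only a sketch: the function name `changed_attributes` is made up, and the attribute values are hypothetical numbers typed in by hand, not output read from a real drive or from DSM.

```python
# Sketch: compare two hand-copied S.M.A.R.T. attribute snapshots.
# All values below are hypothetical, for illustration only.

def changed_attributes(before, after):
    """Return {name: (old, new)} for every attribute whose raw value differs."""
    names = sorted(set(before) | set(after))
    return {
        name: (before.get(name), after.get(name))
        for name in names
        if before.get(name) != after.get(name)
    }

# Snapshot taken before the secure erase / repair (hypothetical values).
before = {
    "Reallocated_Sector_Ct": 0,
    "UDMA_CRC_Error_Count": 2,
    "Power_On_Hours": 12000,
}

# Snapshot taken afterwards (hypothetical values).
after = {
    "Reallocated_Sector_Ct": 8,
    "UDMA_CRC_Error_Count": 2,
    "Power_On_Hours": 12010,
}

for name, (old, new) in changed_attributes(before, after).items():
    print(f"{name}: {old} -> {new}")
```

Power-on hours will always go up, so the thing to watch is whether error-type counters such as reallocated sectors or CRC errors have moved between the two snapshots.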
